Your AI Pilot Worked. So Why Isn't Anything Changing?

The pilot worked. Leadership nodded. Six months later, nothing's changed. The distance between a successful AI pilot and organisational transformation is where most AI investment quietly dies.

Here's a scenario that plays out in mid-market businesses more often than anyone admits: a team runs an AI pilot. It works. Genuinely works — saves time, improves accuracy, impresses the people involved. A presentation gets made. Senior leadership nods approvingly. And then... nothing much happens.

Six months later, the pilot team is still using the tool. Nobody else is. The efficiency gain exists in a pocket. The organisation hasn't changed.

The pilot succeeded. The scaling didn't. And the distance between those two things is where most AI investment quietly dies.

Use cases aren't business cases

A use case proves that AI can do something useful. A business case proves it's worth reorganising around. These are fundamentally different propositions, and treating one as evidence for the other is where organisations get stuck.

A successful pilot tells you the technology works in a controlled environment, with motivated users, on curated data, with someone senior paying attention. What it doesn't tell you is whether the rest of the organisation is ready for what comes next: changed workflows, new skills requirements, different ways of making decisions, and the cultural willingness to trust a system that wasn't there six months ago.

We've written about why transformations fail the departure test — and the same structural problem applies here. If your AI success depends on specific enthusiasts rather than organisational infrastructure, it's a project, not a capability.

The three gaps

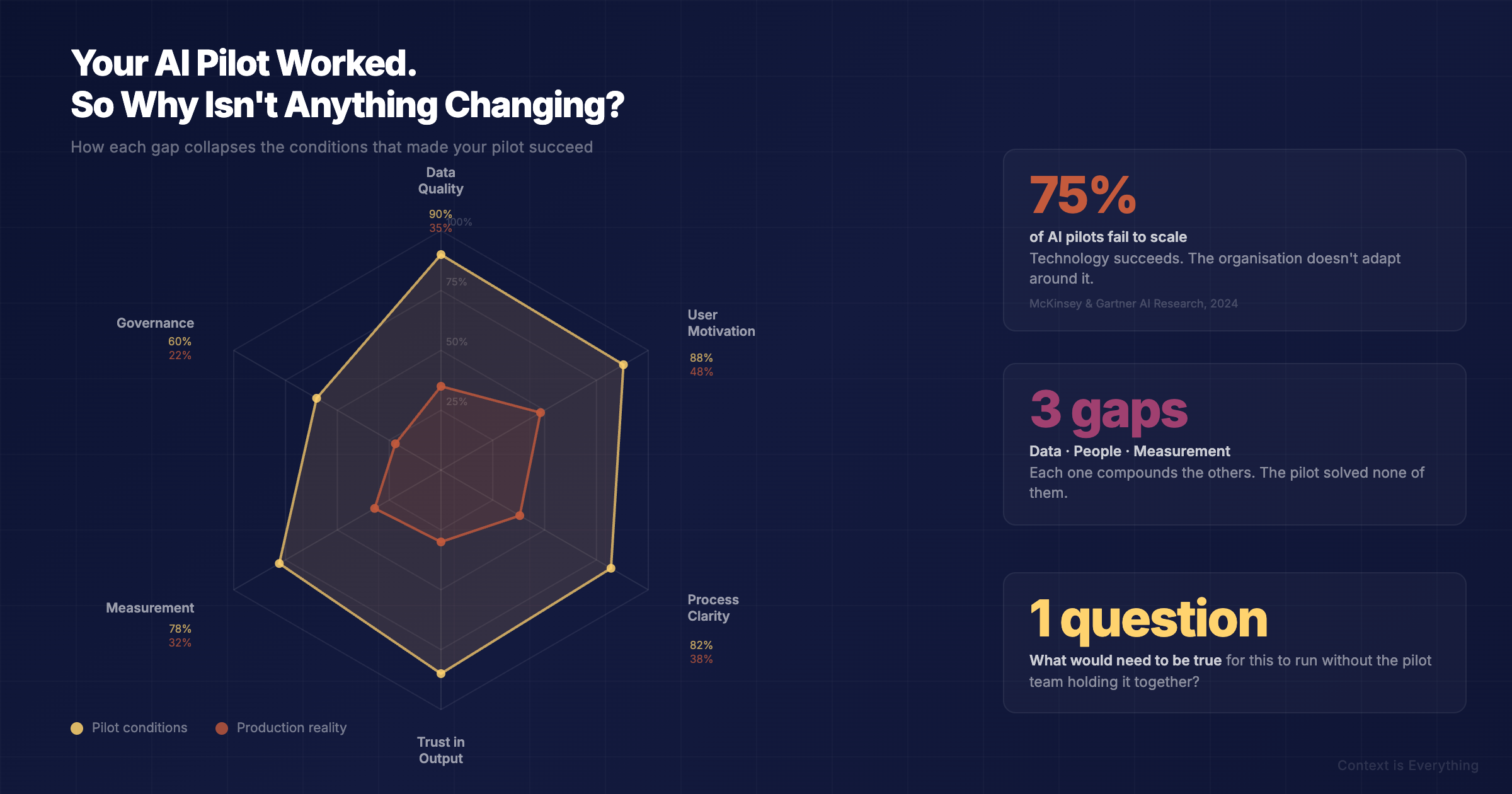

From what we've seen, the distance between a working use case and a functioning business case usually comes down to three things:

The data gap. The pilot used clean, curated data. Scaling means dealing with the data you actually have — inconsistent formats, missing fields, information trapped in email threads and people's heads. Most technology stacks add less value than people think, partly because the data flowing through them was never fit for purpose.

The people gap. The pilot team understood what the AI was doing and trusted the outputs. Scaling means people who didn't build it, don't fully understand it, and have legitimate questions about whether it's going to make their job harder. Adoption isn't a training problem. It's a trust problem — and trust requires evidence, not just a memo from leadership.

The measurement gap. The pilot measured what the tool could do. The business case needs to measure what the organisation gained. Those aren't the same metric. Time saved in one process means nothing if it doesn't translate to capacity released, revenue generated, or risk reduced at a level the board cares about. We've covered how AI actually delivers value — and the honest answer is that it's specific, measurable, and rarely as dramatic as the pilot suggested.
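To make the measurement gap concrete, here is a minimal sketch of the arithmetic that separates a pilot metric from an organisational one. Everything in it is an illustrative assumption rather than a figure from a real pilot: the function name `released_capacity_fte`, the `adoption_rate` and `redeployable_share` parameters, the 37.5-hour working week, and the example numbers.

```python
def released_capacity_fte(
    hours_saved_per_user_per_week: float,
    eligible_users: int,
    adoption_rate: float,
    redeployable_share: float,
    hours_per_fte_week: float = 37.5,  # assumed working week
) -> float:
    """Estimate organisation-wide capacity released, in full-time equivalents.

    adoption_rate: fraction of eligible users who actually use the tool.
    redeployable_share: fraction of saved time that becomes usable capacity
    instead of dissolving into the rest of the working day.
    """
    for name, rate in (("adoption_rate", adoption_rate),
                       ("redeployable_share", redeployable_share)):
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")

    weekly_hours_released = (
        hours_saved_per_user_per_week
        * eligible_users
        * adoption_rate
        * redeployable_share
    )
    return weekly_hours_released / hours_per_fte_week


# Hypothetical numbers: the pilot measured 3 hours saved per user per week,
# and 200 people across the organisation do similar work.
headline = released_capacity_fte(3.0, 200, adoption_rate=1.0, redeployable_share=1.0)
realistic = released_capacity_fte(3.0, 200, adoption_rate=0.4, redeployable_share=0.5)

print(f"Pilot extrapolation: {headline:.1f} FTE")    # Pilot extrapolation: 16.0 FTE
print(f"After adoption and redeployment: {realistic:.1f} FTE")  # After adoption and redeployment: 3.2 FTE
```

The first figure is what the tool could do if everyone behaved like the pilot team. The second is closer to what the organisation would actually gain under these assumed rates, and it is the number a business case has to defend.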

What bridging the gap actually requires

It requires the work that isn't exciting enough to make it into vendor presentations.

It means governance: not as bureaucracy, but as clarity about who's responsible for AI outputs and what happens when they're wrong. (Courts and tribunals have already signalled that "the AI got it wrong" isn't a defence.)

It means skills development that goes beyond prompt engineering workshops and addresses the harder question of how teams work alongside AI systems day to day.

It means honest conversations about which processes are worth scaling and which pilots were interesting experiments that proved a point but don't justify the investment to go further.

And it means accepting that the organisational change required to scale AI is bigger than the technology change — which is exactly the part that gets underestimated every time.

The question worth asking

If your organisation has successful AI pilots but hasn't meaningfully scaled any of them, the diagnostic question isn't "what's wrong with the technology?" It's:

"What would need to be true — about our data, our people, and our way of working — for this to run at scale without the pilot team holding it together?"

That question tends to surface the real blockers. And they're almost never technical.

The AI readiness calculator scores your organisation across data, people, and governance: the three areas where pilots fail to scale.

---

For a practical look at the journey from experimentation to production AI, see From ChatGPT Experiments to Production AI. For guidance on choosing the right processes to scale, see Identifying High-ROI Processes for AI Automation.
