The Verification Debt Nobody Budgeted For

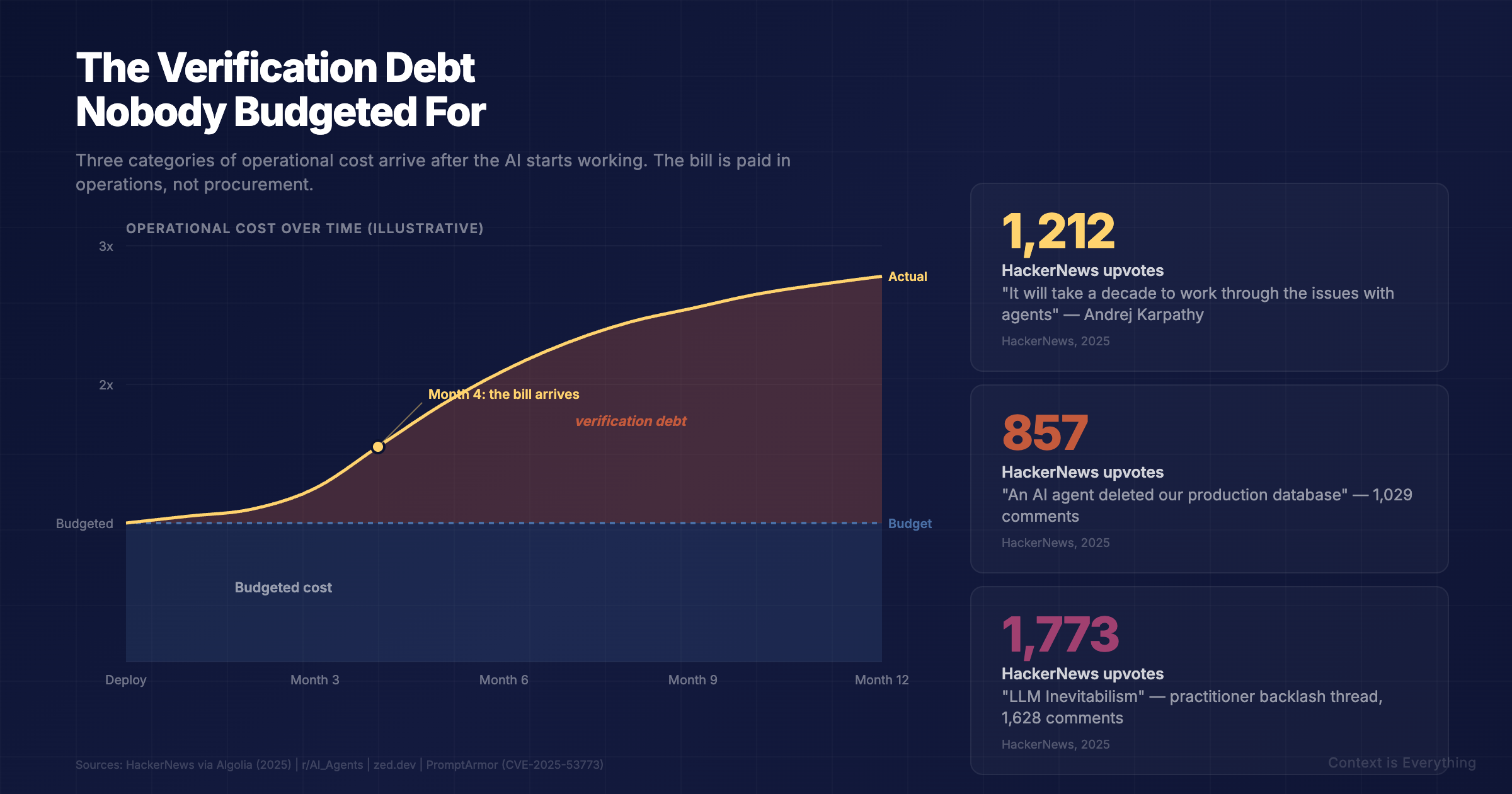

The agent worked in the demo. The pilot went green. The dashboard shipped.

Three months in, someone notices a customer report has the wrong number in it. Then another. Then a Slack message from procurement: the supplier comparison missed a £40k clause. Now you need someone to check every output the agent produces, in case it does it again.

You just discovered verification debt.

It is the bill nobody put in the budget. The hours your team now spend checking, rechecking, and tracing back what the AI produced. The senior person you cannot free up because they are the only one who can spot the subtle errors. The pipeline you cannot trust without a human in the loop, which was the entire point of building it.

Verification debt is one of three kinds of debt that arrive after the AI starts working. Practitioners are talking about them constantly. Buyers are budgeting for none of them.

The three debts

Verification debt. Outputs you cannot trust without checking. The model is confident, the answer is plausible, but you have learned (usually the hard way) that confidence is not accuracy. So now every output gets checked, by a human who could otherwise be doing the work.

Comprehension debt. Code, configs, and content the AI generated that nobody on your team fully understands. It works. Until it breaks. Then you are trying to debug an architecture nobody designed.

Operational debt. Silent failures. Edge cases. Token bills that quietly triple. Agents that drift. The observability you would build for any other production system, but did not build for this one because the demo went so well.
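Even a crude spend check catches the token bill that quietly triples. Below is a minimal Python sketch. It assumes you already log a token count for each agent run. `TokenSpendMonitor`, the 1.5x threshold and the window size are hypothetical choices for illustration, not recommended settings.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class TokenSpendMonitor:
    """Flag agent runs whose token usage drifts above an agreed baseline.

    Hypothetical illustration: baseline, threshold and window are values a team
    would choose from its own pilot data.
    """

    baseline_tokens: float  # expected tokens per agent run
    threshold: float = 1.5  # alert when rolling mean exceeds baseline * threshold
    window: int = 20  # how many recent runs the rolling mean covers
    recent: deque = field(default_factory=deque)

    def record(self, tokens_used: int) -> bool:
        """Log one run; return True if the rolling mean breaches the threshold."""
        self.recent.append(tokens_used)
        if len(self.recent) > self.window:
            self.recent.popleft()  # keep only the last `window` runs
        rolling_mean = sum(self.recent) / len(self.recent)
        return rolling_mean > self.baseline_tokens * self.threshold


if __name__ == "__main__":
    monitor = TokenSpendMonitor(baseline_tokens=4000, window=5)
    for tokens in [4100, 3900, 4200, 9000, 12000]:
        if monitor.record(tokens):
            # prints one alert, for the 12000-token run
            print(f"Token spend alert: run used {tokens} tokens")
```

In practice the alert would feed whatever paging or dashboard tooling you already use for other production systems. The point is that the check exists before month four, not after.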

Why nobody budgeted for it

Because the conversation everyone is having is about whether the AI works. Will the model do the thing? Can we get accuracy above X? Will the pilot pass?

The conversation almost nobody is having is what happens after it does work, when you have to live with what it produced. The procurement decision was made on capability. The bill arrives in operations.

It is a structural blind spot in how AI projects are scoped. The vendor sells the model. The integrator sells the deployment. Nobody sells you the verification, the comprehension, or the observability layer, because nobody owns the consequence of leaving it out.

Hacker News threads are full of these. "An AI agent deleted our production database" hit 857 points and over a thousand comments. Karpathy's note that it will take a decade to work through the issues with agents got 1,212 points. "Why LLMs can't really build software" got 862. These are not hot takes. They are operators describing what month four looks like.

The architectural fix

Verification debt is not inevitable. It is the consequence of building agents the same way you would build a script: source documents in, model out, hope for the best.

The alternative is architectural. Ringfence the context. Inject only verified data. Let the model generate narrative around facts it cannot invent. Let code handle the deterministic checking. The agent becomes structurally incapable of making things up, because there is nothing left to make up.
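Below is a minimal Python sketch of the ringfenced pattern. It rests on three assumptions. First, verified facts arrive as a dictionary that code has already extracted. Second, the model is asked to write only narrative, using `{{name}}` placeholders for figures. Third, code substitutes the real values afterwards. `draft_from_model` is a hypothetical stand-in for whatever LLM call you use, and the fact names and values are invented for illustration. This is one way to express the idea, not a description of any specific production system.

```python
import re

# Hypothetical facts, assumed to be extracted by deterministic code from a
# source spreadsheet. The model never sees the raw source documents.
VERIFIED_FACTS: dict[str, str] = {
    "revenue_fy24": "£4.2m",
    "revenue_growth": "12%",
}

# Slots look like {{revenue_fy24}}.
PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def build_prompt(facts: dict[str, str]) -> str:
    """Offer the model named slots instead of numbers it could misquote."""
    slots = ", ".join("{{" + name + "}}" for name in facts)
    return (
        "Write a short commentary on the results. Never write a figure "
        f"yourself; refer to figures only via these placeholders: {slots}"
    )


def draft_from_model(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; returns a canned draft."""
    return "Revenue reached {{revenue_fy24}}, up {{revenue_growth}} on the prior year."


def fill_placeholders(draft: str, facts: dict[str, str]) -> str:
    """Swap each slot for its verified value; fail loudly on unknown slots."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in facts:
            raise ValueError(f"Draft references an unverified fact: {name}")
        return facts[name]

    return PLACEHOLDER.sub(substitute, draft)


if __name__ == "__main__":
    draft = draft_from_model(build_prompt(VERIFIED_FACTS))
    print(fill_placeholders(draft, VERIFIED_FACTS))
    # Revenue reached £4.2m, up 12% on the prior year.
```

An unknown placeholder raises an error instead of passing through silently, so the model cannot smuggle in a fact the code never verified by naming a slot. Figures typed directly into the narrative are not caught here. That needs a separate check on the final text.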

We have been running this pattern for a year for a financial consultancy: seven specialist agents, sixty-eight individually crafted prompts, eighty-two slide presentations. Zero hallucinated numbers. The financial figures are extracted from source spreadsheets and placed, never generated. Verification happens in code, not by a human reading every output.
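Verification in code can be as simple as rejecting any number in the generated text that does not appear in the source data. Below is a minimal Python sketch. It assumes the source spreadsheet has been exported to CSV, inlined here as `SOURCE` so the example is self-contained. The function names and data are hypothetical.

```python
import csv
import io
import re

# Numbers such as 12, 12.5 or 1,250,000; a match must start and end on a digit
# so that a sentence-ending comma is not captured.
NUMBER = re.compile(r"\d(?:[\d,]*\d)?(?:\.\d+)?")

# Hypothetical source data, standing in for a spreadsheet exported to CSV.
SOURCE = """metric,value
revenue,"1,250,000"
growth_pct,12.5
"""


def normalise(token: str) -> str:
    """Drop thousands separators so '1,250,000' and '1250000' compare equal."""
    return token.replace(",", "")


def source_figures(csv_text: str) -> set[str]:
    """Collect every number that appears in any cell of the source data."""
    figures: set[str] = set()
    for row in csv.reader(io.StringIO(csv_text)):
        for cell in row:
            figures.update(normalise(m) for m in NUMBER.findall(cell))
    return figures


def unverified_numbers(output: str, figures: set[str]) -> list[str]:
    """Return each number in the generated text that is absent from the source."""
    return [n for n in NUMBER.findall(output) if normalise(n) not in figures]


if __name__ == "__main__":
    figures = source_figures(SOURCE)
    print(unverified_numbers("Revenue was £1,250,000, up 12.5%.", figures))  # []
    print(unverified_numbers("Revenue was £1,300,000, up 12.5%.", figures))  # ['1,300,000']
```

One consequence of this design: any figure the narrative legitimately derives, such as a ratio or a year-on-year change, must itself be computed in code and added to the verified set. Otherwise it is flagged. That is deliberate. A figure nobody calculated deterministically is exactly the one a human would otherwise have to check.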

The cost is upfront, in design. The saving is permanent, in operations.

What to do about it

If you are scoping an AI deployment now:

If you have already deployed and you are seeing the bills come in:

The conversation about AI is shifting. The question is not whether the model works. The question is what it costs to live with what it produces.