The Hard Part Isn't the AI

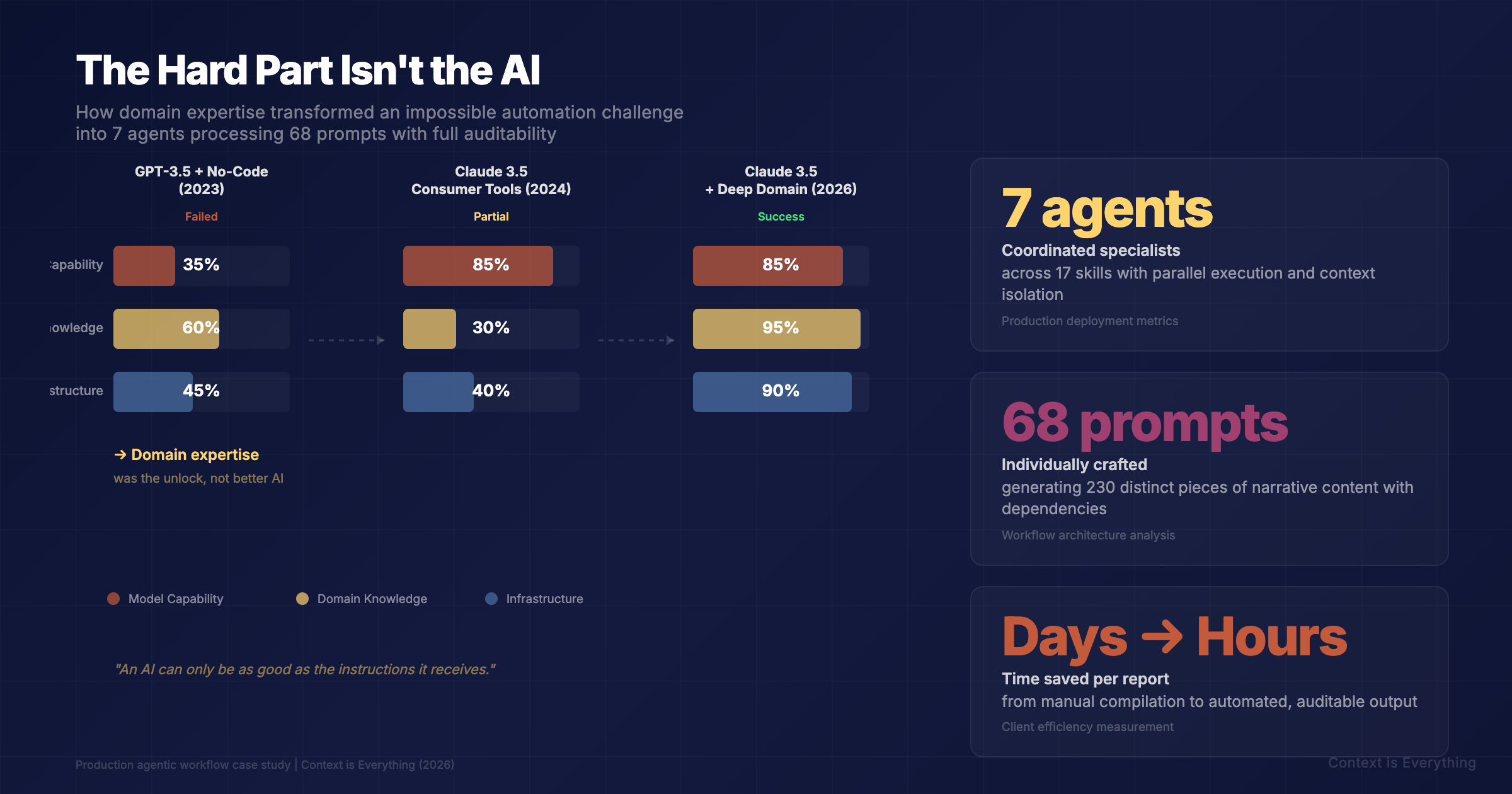

How we built an agentic workflow that turns months of manual analysis into auditable, presentation-ready reports — and why understanding the domain was harder than building the AI.

The Real Challenge

"The MP produces a DDA for the RA."

If that sentence means nothing to you, you're in good company. But in our client's world, it's Tuesday. Every industry has its own dialect — acronyms stacked on acronyms, shortcuts forged over decades of practice, meaning compressed into shorthand that only insiders can parse.

This is the reality of complex, regulated businesses. And it's exactly the kind of environment where the instinct is to throw AI at the problem. The logic seems sound: we have spreadsheets, financial analyses, charts, tables, and a mountain of reference documents. Modern AI can process text. Why not automate the whole thing?

Because when you ask an AI to process documents it doesn't understand, it produces confident, plausible nonsense. And when you're working with financial data — real numbers attached to real decisions — plausible nonsense isn't just embarrassing. It's dangerous.

What We Built

The deliverable is a compiled financial assessment report: a presentation-ready deck (82 slides in the run described under The Result) with charts, tables, benchmarks, and written narrative. The source material is a collection of complex spreadsheets and reference documents. The output is something a senior consultant can review and present, not something they need to reconstruct.

The proprietary process behind this sits entirely in Excel. Activity-based accounting methodology, industry benchmarks accumulated over decades, qualitative and quantitative surveys, complex pivot tables — this is the intellectual property of consultants who are leaders in their field. We didn't replace any of it. We built the automation layer that takes the outputs of their expertise and transforms them into the final product.

The pipeline works in stages with managed dependencies. Data extraction feeds into dozens of individually crafted prompts. The architecture is multi-level: raw data feeds level-one prompts, those outputs feed level-two summaries, which feed level-three executive narratives. Every dependency is explicit.
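To make the idea of explicit, multi-level dependencies concrete, here is a minimal sketch of how such a graph could be ordered and executed. It is not our production code: the node names, the `run_pipeline` helper, and the `stub_step` stand-in for a model call are all hypothetical, and the ordering relies on `graphlib.TopologicalSorter` from the Python standard library (3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each node lists the nodes it needs as input.
# Names starting with "table_" stand for raw extracted data.
DEPENDENCIES = {
    "l1_cost_by_activity": {"table_costs"},
    "l1_benchmark_gap": {"table_costs", "table_benchmarks"},
    "l2_section_summary": {"l1_cost_by_activity", "l1_benchmark_gap"},
    "l3_executive_narrative": {"l2_section_summary"},
}


def execution_order(dependencies):
    """Return node names ordered so every node follows its inputs."""
    # static_order() raises graphlib.CycleError if the graph has a cycle.
    return list(TopologicalSorter(dependencies).static_order())


def run_pipeline(dependencies, raw_inputs, run_step):
    """Run each prompt node once all of its inputs are available."""
    results = dict(raw_inputs)
    for name in execution_order(dependencies):
        if name in results:
            continue  # raw extracted data, nothing to run
        if name not in dependencies:
            raise KeyError(f"missing raw input: {name}")
        inputs = {dep: results[dep] for dep in dependencies[name]}
        results[name] = run_step(name, inputs)
    return results


def stub_step(name, inputs):
    """Stand-in for a model call: records which inputs the step received."""
    return f"{name} <- {sorted(inputs)}"


if __name__ == "__main__":
    raw = {"table_costs": "rows...", "table_benchmarks": "rows..."}
    for name, value in run_pipeline(DEPENDENCIES, raw, stub_step).items():
        print(name, "=", value)
```

Declaring the graph as data means a missing input or a circular dependency fails loudly before any prompt runs, rather than surfacing as a subtly wrong summary three levels up.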

Every piece of generated content carries full provenance. Which source document. Which data point. How it was processed. The audit trail is a first-class deliverable — because when you're working with financial data, the ability to prove where every number came from is non-negotiable.
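One way to picture provenance as a first-class deliverable is a record attached to every content item. The sketch below is illustrative only: the class names, field names, file names, and cell references are assumptions made for the example, not the structure of the real system.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Provenance:
    source_document: str  # e.g. the workbook file name
    location: str         # e.g. sheet name and cell range
    processing: str       # how the value or text was produced


@dataclass(frozen=True)
class ContentItem:
    item_id: str
    text: str
    provenance: tuple  # one Provenance per source used

    def __post_init__(self):
        # Refuse content that cannot be traced to at least one source.
        if not self.provenance:
            raise ValueError(f"{self.item_id}: no provenance recorded")


def audit_trail(items):
    """Flatten items into one audit row per (item, source) pair."""
    return [
        {"item_id": item.item_id, **asdict(source)}
        for item in items
        for source in item.provenance
    ]


# Hypothetical example item with two sources.
EXAMPLE = ContentItem(
    item_id="slide-12-para-1",
    text="Overhead cost is above the benchmark.",
    provenance=(
        Provenance("costs.xlsx", "Summary!B4", "extracted verbatim"),
        Provenance("benchmarks.xlsx", "Peers!C9", "extracted verbatim"),
    ),
)
```

Because an item without provenance cannot even be constructed, the audit trail is complete by design instead of being checked after the fact.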

And critically: zero tolerance for hallucinations. Financial figures are extracted and placed, never generated. The AI writes narrative around verified data. It does not invent data.
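The "extracted and placed, never generated" rule can be sketched as two small checks. In this simplified example, which assumes the narrative is drafted with named placeholders instead of literal numbers, verified figures are substituted with `string.Template` and the finished text is then scanned for any number that did not come from the verified set. The function names, placeholder names, and the number-matching regex are hypothetical, and a real check would need to handle more number formats.

```python
import re
from string import Template

# Simplified pattern: integers, thousands separators, decimals, optional %.
NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?%?")


def place_figures(narrative_template, figures):
    """Substitute verified figures into $placeholders.

    Template.substitute raises KeyError if the narrative asks for a
    figure that was never extracted.
    """
    return Template(narrative_template).substitute(figures)


def unverified_numbers(text, figures):
    """Return numeric tokens in the text that are not verified figures."""
    allowed = set(figures.values())
    return [token for token in NUMBER.findall(text) if token not in allowed]


if __name__ == "__main__":
    figures = {"total_overhead": "1,250,000", "gap": "12.5%"}
    draft = "Overhead cost was $total_overhead, which is $gap above the benchmark."
    final = place_figures(draft, figures)
    print(final)
    print(unverified_numbers(final, figures))
```

Any non-empty result from `unverified_numbers` would be a reason to reject the text and not ship it, which keeps the model in the role of writing around the data.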

Why It Failed Before

A year ago, we built the first version using Bubble.io and GPT-3.5. The orchestration worked — we could wire stages together, move data between steps, trigger actions in sequence. But the LLM couldn't do the job. It couldn't cross-reference across documents. It couldn't handle the domain jargon. It couldn't maintain consistency across hundreds of prompts.

Fast forward to today. We ran a deliberate benchmark: could a modern consumer AI tool handle this workflow? We spent four hours before calling it. The model itself was capable — the raw intelligence wasn't the problem. But the tooling collapsed under the weight of the task. Context limits. No filesystem access. No persistent memory between steps.

Complex, multi-stage, domain-specific agentic workflows need purpose-built infrastructure. They need filesystem integration, persistent context, orchestration logic, and the ability to manage hundreds of operations without losing track. General-purpose chat interfaces aren't designed for that.

The Real Foundation

Here's the contrarian claim: the hardest part of building an agentic workflow isn't the AI, the code, or the architecture. It's understanding the domain.

Without knowing what "The MP produces a DDA for the RA" means — really knowing, not just expanding the acronyms — no amount of prompt engineering will save you. Every synonym, every abbreviation, every implicit assumption baked into decades of client process had to be understood before a single line of code was written.

There are no spring chickens on this team. Business modelling, operations, financial analysis, process mapping — this knowledge was built over careers, not bootcamps. When you're staring at a workbook with thirty tabs of pivot tables and activity-based costings, grey hair is an asset.

An AI can only be as good as the instructions it receives. If you don't understand the business, your prompts are garbage — and your output is confident garbage.

We didn't start with code. We started with questions. What does this spreadsheet actually mean? What's the relationship between these tabs? Why is this benchmark structured this way? Six hours with coffee and a whiteboard — two people who understood both the technology and the business, sketching the process, mapping dependencies. That was the foundation.

The Result

Seven specialist agents, coordinated across 17 skills, process 53 extracted data tables, execute 68 individually crafted prompts that generate 230 distinct pieces of narrative content, render 62 tables and diagrams, place 91 charts and images, and assemble it all into an 82-slide presentation, with every single output traceable to its source.

The practical impact is measured in days. The manual version consumed days per person per week, with experienced consultants spending their time on compilation and formatting instead of analysis and client work. That time is now returned to them.

What This Means

A year ago, we couldn't build this. The models weren't capable enough. Today, 7 agents coordinate across 68 prompts to produce an auditable, presentation-ready report from raw spreadsheets — and every number can be traced back to its source.

But the technology was the straightforward part. The months of work were spent understanding a client's business deeply enough to encode it — sitting with their spreadsheets, learning their jargon, mapping their processes, and asking the questions that only experience teaches you to ask.

If your organisation has complex, domain-heavy document workflows that consume specialist time, this is now solvable. Map where AI is likely to deliver the highest ROI in your workflows before committing to a build. The question isn't whether the AI is smart enough. It is. The question is whether the people building the workflow understand your business well enough to get it right.
