The Irreplaceable Expert: Why AI Without Human Oversight Fails in High-Stakes Consulting

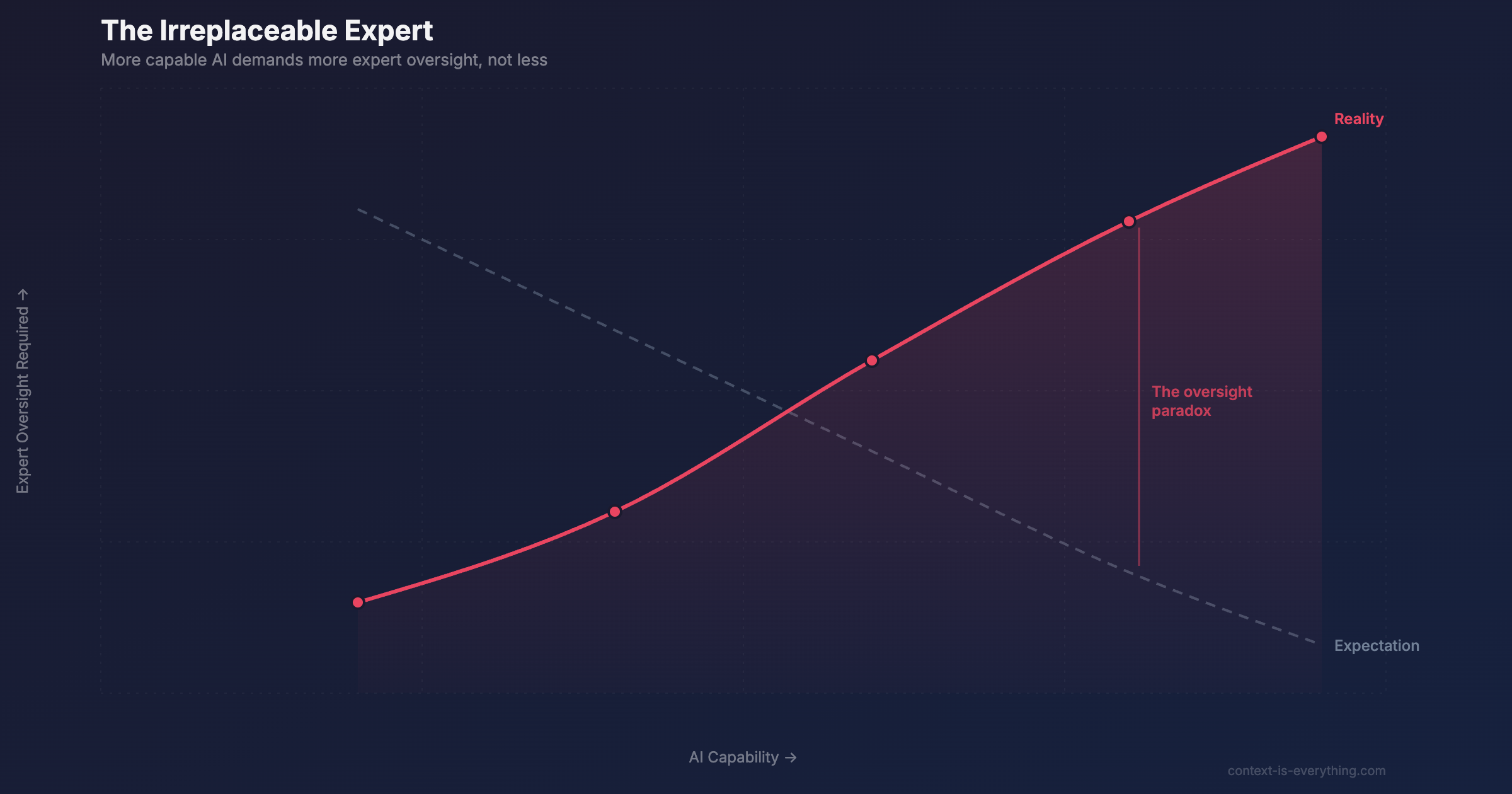

As AI gets better, you would expect to need less human oversight. The opposite is true.

When AI was visibly limited, the rough outputs forced everyone to check the work. The errors were obvious. Now AI produces fluent, confident, well-structured analysis that reads as if an expert wrote it. That is precisely what makes it dangerous in professional services. When AI is 95% correct, the 5% that is wrong is camouflaged by the quality of everything around it.

This is the oversight paradox. The more capable the output looks, the harder it is to catch the subtle misinterpretations that slip past casual review. The risk is not hallucination, which is often obviously wrong. The risk is confident output that is wrong in ways only a domain expert can see.

How do you clone Margaret?

Every organisation has a Margaret. The person who knows not just what happened, but why it matters. The one auditors want to talk to. The one every new starter needs to find. Not on the org chart as Chief Knowledge Officer. Just Margaret.

The technology that promises to replace her works well enough to be convincing. That is precisely the problem.

The honest answer to how you clone her: you can't. Not directly.

But organisations keep trying. The most common approach is to deploy an AI tool and hope it picks up what she knows. It identifies patterns. It synthesises documents. It produces confident, well-structured analysis that reads like an expert wrote it.

And that's exactly where things go wrong.

Because Margaret doesn't just identify patterns. She knows what they mean. That's a different thing entirely. And the gap between those two capabilities is where AI implementations quietly fail.

Three times the AI wasn't Margaret

Financial due diligence. An AI flagged a revenue recognition pattern as a red flag, the kind of finding that changes a deal assessment. Margaret, in this case a sector specialist with fifteen years of deal experience, recognised it immediately as standard practice in that industry vertical. The AI saw an anomaly. Margaret saw a convention. A sound deal nearly got killed.

Pharmaceutical review. AI identified a statistical signal in adverse event data that looked like a safety concern. The regulatory specialist on the team, the one who'd spent a decade reviewing datasets exactly like this, knew it was a reporting artefact: a known, consistent source of noise in this type of data. The AI had no way to know that. It flagged it anyway, with confidence.

Candidate assessment. AI read a career gap as a risk indicator and downgraded a candidate accordingly. The experienced assessor read the same gap as a strategic career pivot, a deliberate move made by senior professionals who know what they're doing. Same data. Opposite conclusion.

In each case the AI wasn't wrong about the pattern. It was wrong about what the pattern meant. Because meaning requires context. And context lives in Margaret.

What Margaret actually holds

There's a term for it: institutional knowledge. The accumulated understanding that doesn't live in any document, doesn't appear in any system, and doesn't transfer automatically to anyone who joins after her.

It's the why behind the what. The history that makes the present legible. The professional judgement that comes from having seen this situation before, in this sector, with this kind of client, and knowing how it turned out.

AI trained on public data doesn't have it. It has everything that's been published. It doesn't have what your firm has learned from years of confidential work that was never written down.

The organisations that figure out how to make that knowledge durable, accessible and queryable will have an advantage that compounds. Not because their AI is better. Because their context is deeper.

So what happens to all the Margarets?

Her job doesn't disappear. It changes.

A consultancy that previously spent a week on manual synthesis can now get AI-generated analysis in 20 to 40 minutes. The time saved doesn't go back into the budget. It goes into Margaret: into the interpretation, the calibration, the judgement that the AI genuinely cannot provide.

Done right, the relationship looks like this: AI handles the breadth, Margaret handles the depth. AI processes the volume, Margaret reads the meaning. AI produces the draft, Margaret makes it reliable.

Remove Margaret from that equation and you don't have a faster process. You have a confident process that's wrong in ways that are very hard to spot.

In professional services, where decisions affect careers, organisations, and financial outcomes, that's not a minor efficiency problem. It's the whole thing.

Is your organisation ready for AI-augmented work? The answer depends on whether you've thought seriously about who your Margaret is, and what happens when she's not there.
