Shadow AI vs Shadow IT: Why This Wave Is Different

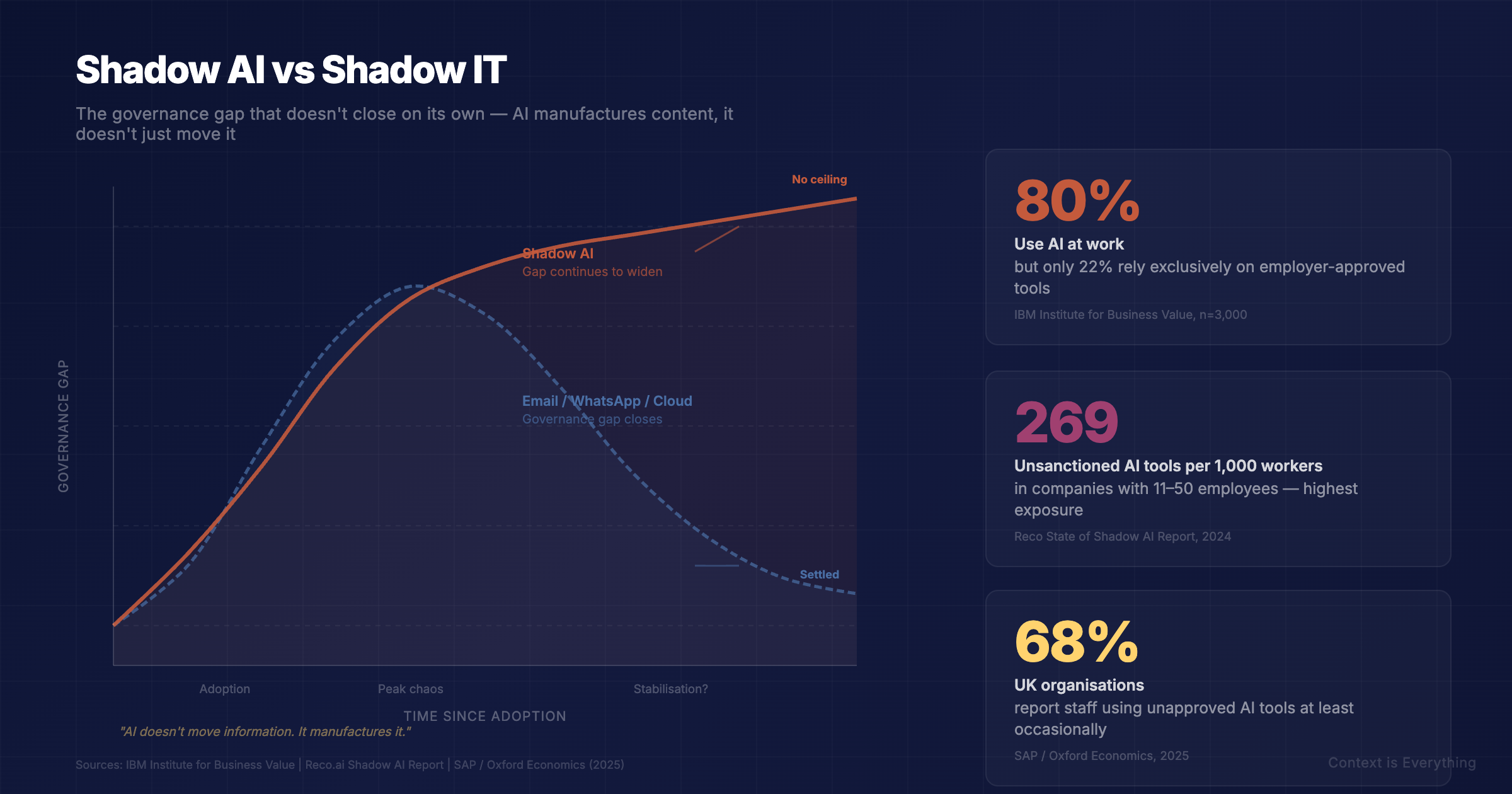

80% of workers use AI at work. Only 22% rely exclusively on employer-provided tools. Shadow AI is not the same problem as shadow IT, and the governance playbook that worked before will not work here.

You have likely already read a piece comparing shadow AI and shadow IT. Everyone seems to have written one: employees adopt unsanctioned tools, organisations scramble to catch up, governance arrives late.

We saw it with email, with WhatsApp, with cloud services, with personal devices. The pattern is well documented and the parallel is obvious.

But there is a reason the parallel breaks down, and almost nobody is talking about it.

Every previous wave of unsanctioned workplace technology eventually found its equilibrium. Email was chaos, then we got policies, archiving, and cc etiquette. WhatsApp was chaos, then GDPR forced governance. The technology was too useful to ban. Organisations adapted. Rules emerged. Things settled.

Shadow AI may be the first wave that does not settle on its own.

How is shadow AI different from shadow IT?

The Italian programmer Alberto Brandolini observed that the energy needed to refute bullshit is an order of magnitude greater than the energy needed to produce it. He was describing misinformation, but the principle maps precisely onto what is happening with AI in the workplace.

Previous waves of unsanctioned technology moved information around. Email moved documents. WhatsApp, WeChat and Slack moved conversations. The information already existed; it just ended up somewhere the organisation had not planned for. The governance challenge was essentially a plumbing problem: redirect the flow, and things stabilise.

AI does not move information. It manufactures it. Analysis, recommendations, structured arguments, client-ready documents, produced at near-zero cost, in seconds, with no audit trail and no guarantee that a human has reviewed the output before acting on it.

An IBM-sponsored Censuswide survey of 1,000 American office workers (February 2026) found that 80% now use AI in their roles, but only 22% rely exclusively on employer-provided tools. Nearly 40% said they prefer external tools because the features are simply better.

A separate IBM Canada study of 3,000 Canadian office workers (September 2025) showed a similar shape: 79% using AI at work, but only 25% relying on enterprise-grade tools.

This is not sabotage. It is pragmatism.

But when the cost of producing plausible-sounding content collapses to near zero and the cost of properly verifying it remains stubbornly human, the gap does not close over time. It widens. That is the asymmetry, and it is why this wave is structurally different from the ones before it.

Shadow AI vs shadow IT at a glance

| Dimension | Shadow IT (email, cloud, personal devices) | Shadow AI (ChatGPT, Copilot, Gemini) |
|---|---|---|
| What it does | Moves information that already existed | Manufactures new information |
| Primary risk | Where the data goes (leakage, exfiltration) | What comes back (unverified output entering decisions) |
| Audit trail | Recoverable from logs and storage | Often non-existent once output is pasted into a deliverable |
| Resolution path | Plumbing: route the flow back inside the perimeter | No equivalent: manufactured output cannot be retrieved |
| Governance maturity | Settled within 5 to 10 years per wave | No prior equilibrium to copy from |

The implication is simple. Shadow IT could be solved with policy, perimeter and patience. Shadow AI cannot.

Where this hits hardest

Reco's 2025 State of Shadow AI report, which analysed enterprise customer telemetry rather than survey responses, found that organisations with 11 to 50 employees face the highest exposure: an average of 269 unsanctioned AI tools per 1,000 workers, and 27% of employees in that cohort using unsanctioned AI tools. These are exactly the organisations least equipped to monitor or manage it.

For professional services firms and consultancies, the risk is not primarily data leakage. It is reputational. When staff augment their expertise with unvetted tools and present the output as the firm's own work, the quality assurance framework that clients are paying for has a hole in it that nobody can see.

Consider a worked example. A senior consultant pastes a client's draft strategy into ChatGPT and asks for a critique. The output is sharp. Some of it goes into the next slide pack, with light editing. The client receives advice that is partly the firm's, partly the model's, and entirely uncited. If that advice is wrong, no one in the chain has a record of how it was produced. The professional indemnity insurer was never consulted. The client never knew the question existed.

That is not a hypothetical. It is happening every week, in every consultancy, somewhere.

Separately, SAP and Oxford Economics research, surveying 1,600 senior executives across eight global markets (including 200 UK respondents), found 68% of organisations report staff using unapproved AI tools at least occasionally. The same research found 44% of businesses have already seen data or IP exposure as a result. As one commentator put it, this is not a sign of resistance. It is a sign of enthusiasm outrunning governance.

The UK governance dimension

Three things make shadow AI a more acute problem in the UK and EU than in less regulated markets.

GDPR. Pasting client information into a public AI tool is a data transfer to a third-party processor. If that transfer was not on the lawful-basis register, the controller has a breach to declare. Most organisations have not asked this question for any tool their staff use casually.

The EU AI Act. General-Purpose AI obligations begin to bite from August 2026, including transparency requirements and prohibited-use enforcement. Firms relying on shadow AI tools without a register of which models touch which data are starting from negative ground.

Professional indemnity. PI policies typically require a duty of care and a disclosed methodology. AI-generated work product, used without disclosure, may sit outside cover. Insurers are starting to ask. Most policy renewals in the next eighteen months will include AI-related warranties.

None of these are theoretical. They are starting to feature in tenders, insurance reviews, and ICO-style audits. The organisations that have not yet asked "what AI is in our work product?" will be asked the question externally before long.
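A register of which models touch which data does not need to be elaborate to be useful. The sketch below is one possible minimal shape, not a prescribed format: the `RegisterEntry` fields, the category labels and the `unsanctioned_touching` helper are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class RegisterEntry:
    """One row in a hypothetical AI register: a tool and the data it handles."""
    tool: str
    model: str  # "unknown" is a legitimate value for tools staff adopted themselves
    data_categories: set = field(default_factory=set)
    sanctioned: bool = False


def unsanctioned_touching(register, category):
    """Return the names of unsanctioned tools recorded as handling `category`."""
    return sorted(
        entry.tool
        for entry in register
        if not entry.sanctioned and category in entry.data_categories
    )


# Illustrative entries only; the tools and categories are assumptions.
register = [
    RegisterEntry("ChatGPT", "unknown", {"client_documents", "internal_notes"}),
    RegisterEntry("Copilot", "unknown", {"internal_notes"}, sanctioned=True),
    RegisterEntry("Gemini", "unknown", {"client_documents"}),
]

print(unsanctioned_touching(register, "client_documents"))
```

Even a list this small answers the question a tender, insurer or auditor is likely to ask first: which unapproved tools have seen client material.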

The communication collapse

There is a second-order effect that almost nobody is discussing.

AI makes it trivially easy to produce lengthy, structured, authoritative-sounding correspondence. The result is a new kind of escalation: one party sends an AI-enhanced email, the recipient lacks time to engage with it properly, so they feed it into their own AI and fire back an equally detailed response. Both parties believe they have communicated. Neither has read what the other wrote.

With email overload, we adapted by stripping responses back to one-liners and thumbs-up emojis. With AI, the pendulum has swung the other way. The same person who sent a thumbs-up last year now sends 600 words, and it took them less effort than the emoji did.

The length is no longer a sign of thought. It is a sign of delegation. And when decisions are being made on the back of correspondence that no human has fully read, that is a governance gap no policy document will catch.

Why can't traditional IT governance solve shadow AI?

Banning AI tools is demonstrably ineffective. The IBM research found that 60% of workers said hands-on training would increase their use of approved tools. The problem is not defiance. It is a gap between what employees need and what employers provide.

Three things make a difference:

Visibility first. You cannot govern what you cannot see. Before writing a policy, understand what your staff are actually using. Our free AI Readiness Calculator and Shadow AI Risk Assessment are five-minute starting points.

Compete, do not prohibit. If external tools are winning because the features are better, provide something better internally. Private AI deployment, trained on your methodology and inside your infrastructure, removes the incentive to go elsewhere.

Methodology over policy. A usage policy tells people what they cannot do. A methodology tells them how to use AI well: when to delegate, when to verify, when the task genuinely requires human attention. That distinction is where the Contour Methodology sits, building layers of context so that AI-assisted work is traceable, verifiable, and owned.
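As a rough illustration of the visibility step, the sketch below counts distinct users per AI tool from a web proxy export. It assumes a simplified, hypothetical log format of one `<user> <url>` pair per line, and the `AI_TOOL_HOSTS` watch list is an illustrative starting set rather than a complete inventory.

```python
from urllib.parse import urlparse

# Hypothetical watch list of public AI tool hostnames.
# Extend it to match what your own staff actually use.
AI_TOOL_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def tally_ai_usage(log_lines):
    """Count distinct users per AI tool from '<user> <url>' log lines."""
    users_by_tool = {}
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip malformed lines rather than guess at their meaning
        user, url = parts
        host = urlparse(url).hostname or ""
        tool = AI_TOOL_HOSTS.get(host)
        if tool:
            users_by_tool.setdefault(tool, set()).add(user)
    return {tool: len(users) for tool, users in users_by_tool.items()}


# Made-up sample lines in the assumed format.
sample = [
    "alice https://chatgpt.com/c/1",
    "bob https://chatgpt.com/",
    "alice https://claude.ai/new",
    "carol https://intranet.example.com/",
]
print(tally_ai_usage(sample))
```

A tally like this only shows who reached which tool from the corporate network. It says nothing about what was pasted in or what came back, which is why it is a starting point rather than a control.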

A practical starting point

For most organisations, the right first move is not policy. It is understanding. Before deciding what to ban or approve, find out:

The answers to those four questions will tell you more about your actual shadow AI exposure than any policy document will.

Previous technology waves settled because the underlying problem was containable. Move the data back inside the perimeter, and the risk reduces. Shadow AI does not work that way. The risk is not where the data goes. It is what comes back.

Frequently asked questions

What is shadow AI?

Shadow AI is the use of AI tools by employees without the knowledge, approval, or oversight of IT or management. This includes using ChatGPT, Copilot, Claude or Gemini with company data outside sanctioned channels. The result is data exposure, compliance gaps and audit-trail blind spots that the organisation cannot see and therefore cannot manage.

How is shadow AI different from shadow IT?

Shadow IT moves information that already exists. The risk is where the data goes. Shadow AI manufactures new information, including analysis, recommendations and client-ready documents. The risk is what comes back into the organisation's decisions, often without human review. Shadow IT could be solved with policy and perimeter. Shadow AI cannot, because the manufactured output cannot be retrieved once it has entered a decision.

What are the main risks of shadow AI?

Three categories matter most: data exposure (sensitive information shared with AI platforms outside lawful-basis registers), reputational exposure (unverified AI output entering client work product), and decision-quality exposure (decisions made on AI-generated correspondence that no human has fully read). For UK and EU organisations, GDPR and the incoming EU AI Act add specific compliance dimensions on top.

How do you manage shadow AI in the workplace?

Start with visibility, not policy. Identify what staff are actually using and for what tasks. Then provide a competitive sanctioned alternative, since prohibition does not work when the external tool is genuinely better. Finally, replace usage policy with methodology: the difference between a speed-limit sign and a driving instructor. One states a rule. The other builds judgement.

Is banning AI at work effective?

No. Reco found shadow AI persists for 400 or more days in many enterprises, and IBM found 60% of workers said hands-on training would increase their use of approved tools. The problem is not defiance. It is a capability gap. Bans concentrate use in less visible channels rather than reducing it.

---

Explore our free AI Security Training (UK and US versions, no signup required), the Shadow AI Risk Assessment, or the AI Readiness Calculator to understand where your organisation stands.
